There is an old saying attributed to Prince Bismarck, the Iron Chancellor of Germany, that “to retain respect for sausages and laws, one must not watch them in the making.”

Amusing as the saying may be, accountability requires transparency. And the current UK Covid-19 Inquiry into Core UK Decision-Making and Political Governance is giving an often painful insight into the Government’s decision-making processes.

Decision-making is studied by multiple disciplines, from statistics to psychology to economics. Can we learn anything from these studies that helps us understand more about the decision-making of the UK Government in response to the enormous challenge of Covid?

In this post I will use two frameworks from the field of decision analysis that I have used to support decision-makers from the shop floor to the board room. These frameworks have helped me put the evidence into context and, I believe, point the way to ‘lessons learned’.

These are just my initial thoughts so let me know in the comments what you think.

What is Decision Quality?

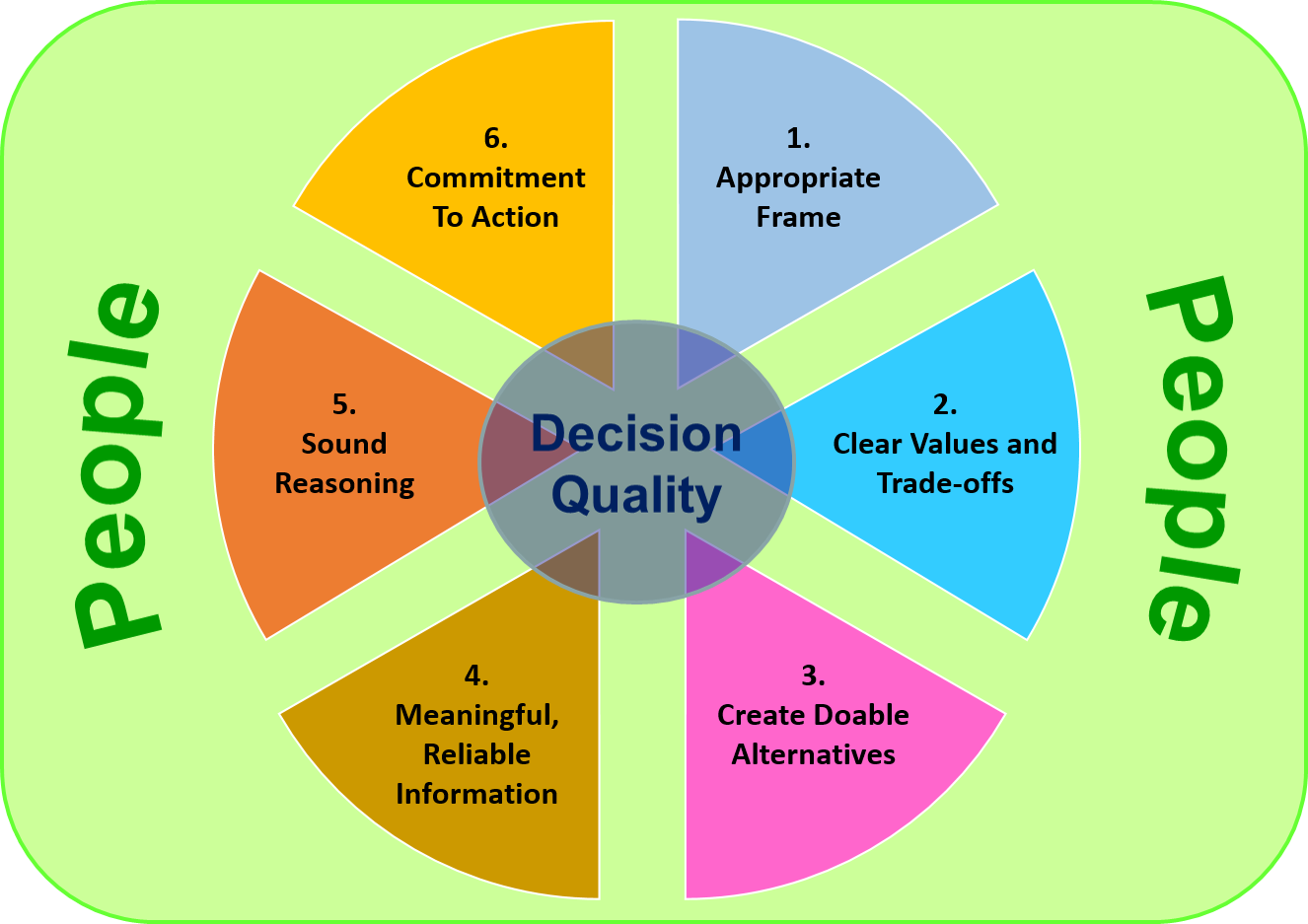

The discipline of decision analysis covers a wide area, but one of the approaches that I find most useful comes from the work of Professor Ron Howard of Stanford University. As part of his work he identified six requirements for a high-quality decision, and these are shown in the accompanying diagram.

As you can imagine, there is a lot to unpack in each of these requirements, but before going into the detail let me make a few general points. First, the six requirements are linked and do not stand in isolation. Imagine them as links in a chain, where the overall decision quality is only as good as the weakest link. Secondly, although they are shown in sequence, any decision-making process is iterative and will loop back to change or update any of the requirements.
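For readers who like to see an idea written down precisely, here is a minimal sketch of the ‘weakest link’ point. The scores are invented purely for illustration (they are not taken from the Inquiry or from Professor Howard’s work), and the 0-to-1 scale and the function name are my own assumptions.

```python
# Hypothetical 0-to-1 scores for the six requirements (illustrative only).
scores = {
    "appropriate frame": 0.8,
    "clear values and trade-offs": 0.3,
    "doable alternatives": 0.7,
    "meaningful, reliable information": 0.6,
    "sound reasoning": 0.9,
    "commitment to action": 0.8,
}


def decision_quality(scores):
    """Return the weakest requirement and its score.

    Under the chain analogy, overall quality is capped by the lowest
    score, so a minimum is used here rather than an average.
    """
    weakest = min(scores, key=scores.get)
    return weakest, scores[weakest]


weakest_link, overall = decision_quality(scores)
print(f"Overall quality {overall} is set by: {weakest_link}")
```

An average of these scores would look respectable, which is exactly why the chain analogy is useful: strength in five links cannot compensate for weakness in the sixth.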

Finally, the chart does not include any reference to whether the decision was a success or not. This may seem very strange at first but is deliberate. To explain why, let’s take a real-life example of someone who is driving when their mobile phone rings. They don’t have ‘hands free answering’, so they have to decide whether to take their hands off the steering wheel and their eyes off the road to answer the call. Let’s imagine that they answer the phone, have the conversation, and continue their journey with no problem. Does that mean it was the right decision? Or suppose they take the opposite decision, do not answer the phone, and then have an accident. Does that make it a bad decision?

From this example I hope that you can see that bad quality decisions can have good outcomes and good quality decisions may have bad outcomes. That doesn’t mean that we shouldn’t aspire to make the highest quality decision possible, but measuring the quality of a decision based on its outcome is not always appropriate. More fundamentally, it’s not possible to use the outcome to judge the decision’s quality for the simple reason that you do not know the outcome at the time of the decision. That’s why the six requirements are considered independently of the outcome.
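The driving example can be put into rough numbers. The sketch below is only an illustration: the two accident probabilities are made up by me to show the logic, not real road-safety statistics, and the variable and function names are hypothetical.

```python
# Hypothetical accident probabilities for a single journey (NOT real data).
P_ACCIDENT_IF_ANSWER = 0.02
P_ACCIDENT_IF_IGNORE = 0.005


def expected_harm(p_accident, harm=1.0):
    """Expected harm of a choice: probability of an accident times its cost."""
    return p_accident * harm


# Compare the choices using what is known at the time of the decision.
harm_answer = expected_harm(P_ACCIDENT_IF_ANSWER)
harm_ignore = expected_harm(P_ACCIDENT_IF_IGNORE)
better_choice = "ignore" if harm_ignore < harm_answer else "answer"

# Even the poorer choice usually ends without an accident.
p_lucky_escape = 1 - P_ACCIDENT_IF_ANSWER

print(f"Better choice at decision time: {better_choice}")
print(f"Chance the poorer choice still turns out fine: {p_lucky_escape:.0%}")
```

With these assumed figures, ignoring the call is the better decision, yet answering it ends well on the vast majority of journeys. A single good outcome therefore tells us very little about the quality of the choice that preceded it.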

When providing evidence to the Covid Inquiry, witnesses often mix outcomes of decisions with comments on the decision-making process. Indeed, the questions put to them frequently frame the discussion in this way. Although it’s difficult to separate outcomes from decision quality, I think it’s important to try to do so.

Let’s now move to the six requirements that can be used to judge decision quality at the time we make the decision. For each I will give a short description together with some ‘failure modes’ that have been seen in the evidence provided at the Covid Inquiry.

1. Appropriate Frame

The frame of a decision specifies the problem or opportunity at hand. It defines why we are making the decision; the scope (what’s included and excluded); and the perspective (what to take as given and how to approach the problem).

Failing to agree an appropriate frame can set the decision-making process on the wrong path from the start. This clearly happened with Covid, where the problem was framed as a flu pandemic, which led to the initial approach of contain, delay, research and mitigate. That approach failed and had to be rapidly changed, leading to delays in taking action.

2. Clear Values and Trade-offs

Values are what we aim to achieve with our choice. They are what we want and care about and are used to assess the merits of each alternative. Often we want more than one thing from a decision, especially when many parties are involved. When one alternative provides everything wanted then the decision is easy. But this rarely happens and decision-makers must make trade-offs among different values.

Here the evidence from the Covid Inquiry is clear. Many witnesses have stated that in the early stages of the pandemic and through most of 2020 they did not understand what the government’s aims were, or how willing it was to make the trade-offs between the health benefits of interventions to control the pandemic and the socio-economic costs these would bring. The government, or rather the then Prime Minister, would rapidly swing from prioritising the economy to prioritising the health service. In the absence of clearly stated values and trade-offs, the teams supporting the decision-makers found it very difficult to provide effective support. In addition, they often had to make their own value judgements, which resulted in tensions within the supporting teams.

In contrast, 2021 saw greater clarity from the government. Its stated aims were clear: to protect the population through rapid vaccination and ‘no further waves of infection’. This clarity led to a well-managed staged exit from the third lockdown, characterised by the ‘Data not Dates’ approach.

3. Create Doable Alternatives

Alternatives describe what we can do, and you cannot make a good decision without good alternatives. What is also important is that you have a range of alternatives that are sufficiently different to offer a real choice. Each alternative will have unique risk, reward and success possibilities. Often decision-makers will focus on one alternative that is easily accessible, that they are familiar with, or that they have some experience of.

Here we see that at the early stages of the Covid pandemic there was an issue with the role of SAGE (Scientific Advisory Group for Emergencies), the expert forum tasked with providing scientific advice to the government. Its role was reactive rather than proactive. Government policy-makers decided on the alternatives and asked SAGE to evaluate their potential impact. Several members of the SAGE modelling group offered alternative approaches based on their expert knowledge of managing pandemics, but these were not discussed within SAGE or presented to the Government. Once again, this led to tensions between some members of SAGE sub-groups.

We will see later that a better approach would be for Government decision-makers to engage in a dialogue with the expert teams when setting out the doable alternatives.

4. Meaningful, Reliable Information

Meaningful information is anything we know or would like to know that is relevant to selecting among the alternatives. It should be unbiased and come from trusted sources. Without relevant and reliable information, decision-makers must fly in the dark.

However, there are two problems here: timeliness and uncertainty. First, most decisions are time-bound and information is often incomplete. There is a risk that ‘paralysis by analysis’ can lead to delayed decisions, especially for difficult decisions. Secondly, all relevant information is drawn from the past, but decisions are about the future, which is inherently uncertain. Decision-makers need to have an understanding of uncertainty and ways of assessing its impact when making decisions.

This is a very challenging area when dealing with a pandemic. Important information about the nature of the virus (infectiousness and infection fatality rate) was rapidly evolving during the first two months of 2020. Also, surveillance systems in the UK were not providing reliable information on the spread of the virus. Undoubtedly, improvements could be made, particularly in the area of early surveillance. However, there will always be a role for expert interpretation of the information at the early stage of any pandemic.

A final important point is that we have yet to see at the Covid-19 Inquiry the economic information used as part of the decision-making process. It will be interesting to see if it went through the same rigorous interrogation that the scientific information received through the SAGE process.

5. Sound Reasoning

The previous requirements help us to understand what is valued, what can be done, and what is known. Sound reasoning pulls these together and explains why the alternative was chosen. Sound reasoning leads to conclusions that can be articulated and defended through rational argument.

Here the evidence from the Covid Inquiry to date shows a highly chaotic decision-making process driven by personalities and internal politics. These are not the characteristics of sound reasoning being used for making decisions. The consequence can be seen in the evidence given to the Covid-19 Inquiry on Oct 11 by Professor Tom Hale, lead investigator for the Oxford COVID-19 Government Response Tracker, who reported that, compared with other equivalent governments, the UK’s response in 2020 was more of a ‘roller-coaster’. The behaviour he highlighted was that the UK implemented control measures later than other countries, and consequently had to make them more stringent. Greater relaxations then followed, resulting in another cycle of delayed and more stringent measures.

6. Commitment to Action

Decisions should result in action and it’s important that a commitment is made to take action. Almost always those making the decision are not those implementing the decision, and this is where clarity is key.

Too often those implementing a decision are not clear on the action to take, and this leads to confusion. Furthermore, a lack of clarity can allow implementers to pursue their own ‘pet’ alternatives, which are not necessarily consistent with the decision-makers’ aim. Involving key members of the implementation team in the decision-making process can help overcome these issues.

It’s difficult to judge from the Covid-19 Inquiry how well this requirement was met. However, witnesses have noted that the absence of operational knowledge from public health experts in policy-making led to later implementation problems. This was particularly the case for the implementation of the contact tracing system.

In general, engaging operational experts early in the decision-making process is important both to confirm that the alternative solutions considered are ‘doable’ and to ensure commitment to action.

A Better Process?

For major challenges, decision-makers need support, and project teams are usually set up to help. As we have already seen, clarity across the six dimensions of Decision Quality is vital for a high-quality decision. One way to achieve this clarity is through dialogue, and the following schematic shows an idealised process to achieve it.

The word dialogue may seem strange here but it is important. Dialogue is more than communication and it’s certainly not a debate between two opposing views. Rather, it is where the decision-makers and the project team agree a shared position that they can move forward with. This is not always easy, especially when there are strongly held opposing views. Independent facilitators may often be required to help teams agree a shared position.

Recommending a decision process for Covid is beyond the scope of this post, but it’s important to note that the Project Team needs to have representation from all key stakeholders in the decision. For Covid, this would be much wider than the scientific community and would include those representing social and economic impacts. Indeed, SAGE would be an example of a support team, along with support teams representing the economy and other important sectors.

Although the schematic shows dialogue happening across each of the dimensions, the first two areas are probably the most important ones on which to reach a shared position. Without these solid foundations, the downstream steps are not likely to succeed.

To conclude

If you got this far, then thanks. I hope that the frameworks I have presented provide a helpful way of thinking about decision-making in the context of the UK’s response. I am certainly not saying that all government decisions should follow the processes I’ve outlined, but I think that there are lessons that can be learned by taking a systematic view of decision quality.

Any comments would be much appreciated.